Have you noticed that WAN design is changing? Well, I think it is, and I’d like to share my thoughts.

A Little History

Old TDM networks used to be “random” meshes of T1s, etc. Part of what drove the design was mileage-centric costs, so one would interconnect nearby sites and end up with a network that looked sort of like chicken wire fencing. Capacity planning was painful, as were multi-hop paths and latency. And the mesh-iness tended to aggregate traffic, thereby aggravating congestion and cost.

As Frame Relay then ATM came in, things gradually evolved to where they are today. We now tend to ignore distance, and just interconnect sites with MPLS or MetroEthernet WAN “clouds.”

One of the old rules of thumb was to home anything circuit-like to the datacenters; they generally don’t move or get replaced very often.

With MPLS, that became less of a concern. However, most applications moved to the datacenter (with the possible exception of portions of the federal government), so traffic flows were mostly datacenter-centric, excepting VoIP and other anomalies. That meant the WAN was also still datacenter-centric.

What’s Changed?

Two things:

- The apps (and sometimes the data) are moving to CoLocation datacenters or the cloud

- SaaS and cloud-based apps and managed services require efficient, lower latency internet access

Concerning that latter item, up until recently (#NFD13), I’d have said there were two current design approaches, but now I think there are three:

- Centralized internet access (one set of firewalls and security enforcement tools)

- Decentralized internet access (or every site for itself)

- Regionalized internet access

The trend is away from the centralized approach, except for geographically localized organizations.

Understanding the Implications

Increased business use of the internet and SaaS, etc., leads many to conclude they want each site to access the internet directly, the decentralized model. The problem one then runs into is the cost of putting a firewall and other security tools at each site, plus managing all that stuff, let alone backhauling log information to a central location.

That’s great if you’re building an IT security empire; not so good if your budget is constant or shrinking. The costs go down if you’re willing to use your router as a firewall and security tool. I rarely see that. More often I see firewalls (etc.) being used as border routers, which has its drawbacks (e.g. scaling IPsec VPN securely to many sites).

I’ll ignore DNS interception, whitelisting, and routing as a hybrid approach that just muddles the picture a bit. On another front, services like Zscaler and Cisco Umbrella attempt to address the distributed hardware cost/management issues for you by providing cloud-based protections. That sort of works but can add latency.

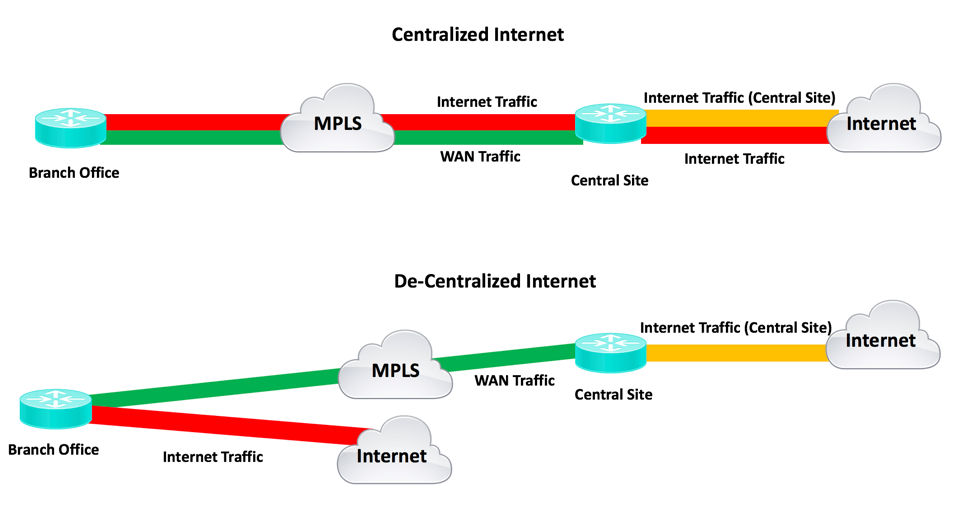

The problem with centralized internet access is that you back-haul all the internet traffic over your WAN, then provide egress to the internet. The consequence is bigger WAN links (internet plus datacenter traffic), and a bigger central internet link.
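The back-haul effect is simple arithmetic. Here is a minimal sketch of it; the site names and Mbps figures are made up for illustration, and the model ignores burstiness and oversubscription.

```python
# Hypothetical per-site demand in Mbps: (datacenter-bound, internet-bound).
# These numbers are illustrative assumptions, not measurements.
sites = {
    "branch_a": (20, 30),
    "branch_b": (10, 40),
    "branch_c": (15, 15),
}

def centralized(sites):
    """Each WAN link carries datacenter plus internet traffic; the
    central internet link carries every site's internet traffic."""
    wan = {name: dc + inet for name, (dc, inet) in sites.items()}
    central_internet = sum(inet for _, inet in sites.values())
    return wan, central_internet

def decentralized(sites):
    """Each WAN link carries only datacenter traffic; each site has
    its own internet link sized for its own internet traffic."""
    wan = {name: dc for name, (dc, _) in sites.items()}
    local_internet = {name: inet for name, (_, inet) in sites.items()}
    return wan, local_internet

wan_c, central_link = centralized(sites)
wan_d, local_links = decentralized(sites)
print("centralized WAN links:", wan_c, "central internet link:", central_link)
print("decentralized WAN links:", wan_d, "local internet links:", local_links)
```

In this toy model the internet-bound traffic is paid for twice under centralization: once on each site's WAN link and once more on the central internet link.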

Centralized internet does simplify some aspects of network security: fewer firewalls and security devices, fewer connection points to monitor.

The following diagram illustrates the bandwidth savings with decentralized internet:

The other consideration with the centralized approach is latency. Instead of taking the direct path from an end-user site to an internet business tool, traffic has to go to the central site, then to the tool. If the tool website uses a CDN (content delivery network), you’re pulling all those static graphics, etc. through your central site, rather than from somewhere near the end user. Slow!

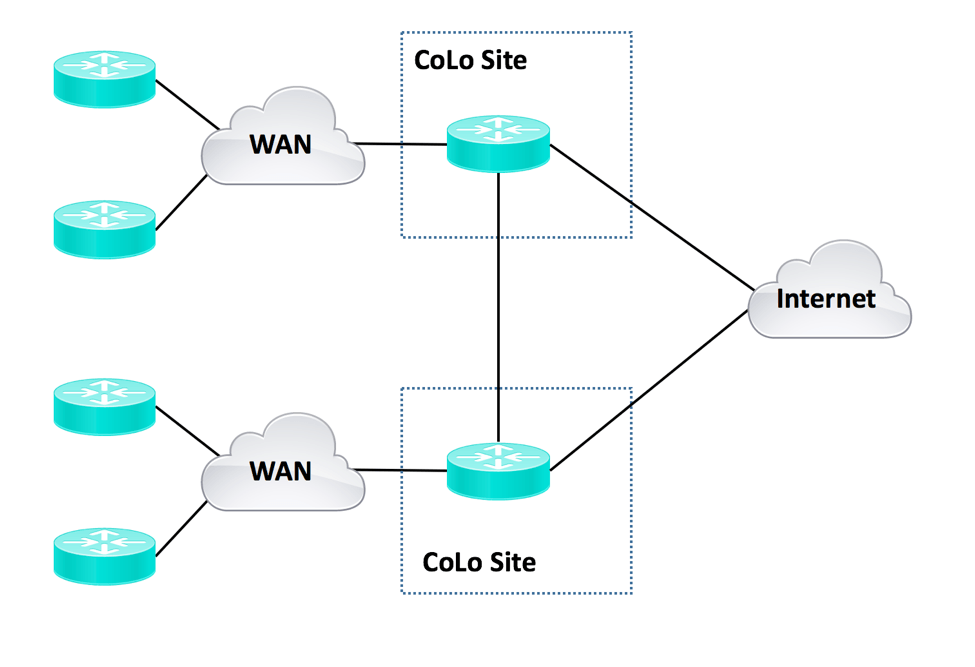

The regionalized internet approach tries to strike a balance between the two. There are two variations:

- Put hub routers into CoLo sites

- Put virtual hub routers in the cloud

The first of these is something Equinix and others excel at. One virtue of a good CoLo is inexpensive fast connections to various internet and cloud providers.

The second of these is the virtual equivalent of that. Challenges for the virtual version include limitations on the performance of a virtual router or security device, possible difficulty of getting inter-cloud provider connections, and cloud provider fees for egress traffic.

Here is what a regional approach might look like:

Datacenters would get connected to the regional hubs as well.

The regional hubs would get interconnected by higher speed links. Good CoLocation sites generally have inexpensive connectivity to many high-speed providers.

With regionalization, you still have some of the same WAN back-haul overhead as in the centralized internet design above. The overhead is just split between the regional locations. Latency should generally be lower than with centralized, assuming a wide geographic spread.
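To put rough numbers on the latency comparison between the three models, here is a small sketch. Every latency value and site name in it is an assumption chosen for illustration; plug in your own measurements.

```python
# Hypothetical one-way latencies in milliseconds; none of these are measurements.
EDGE_MS = 5  # assumed hop from any internet egress point to a nearby SaaS/CDN edge

# Assumed WAN latency from each site to its internet egress point, per model.
wan_ms = {
    "centralized": {"site_west": 40, "site_mid": 20, "site_east": 5},
    "regionalized": {"site_west": 8, "site_mid": 10, "site_east": 5},
    "decentralized": {"site_west": 0, "site_mid": 0, "site_east": 0},
}

def internet_latency(model, site):
    """WAN leg to the egress point plus the hop from egress to the SaaS/CDN edge."""
    return wan_ms[model][site] + EDGE_MS

for model in wan_ms:
    worst = max(internet_latency(model, site) for site in wan_ms[model])
    print(f"{model:14s} worst-case one-way latency: {worst} ms")
```

The site closest to the central egress sees little difference; the far-away sites are the ones that gain from a regional hub.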

MPLS is a challenge for this: The routing is unaware of distances and latency. Unfortunately, your CE router cannot specify the cloud egress PE for traffic engineering to regionalize traffic. DMVPN or mGRE is one way to tackle that: You can do regionalized hub-and-spoke topologies on top of MPLS. This is what some SD-WAN vendors are promoting. IWAN can certainly do that, too.

If you have high-speed links between the CoLo sites, that provides some geographic modularity and hierarchy in your network.

Another approach would be separate regionalized MPLS VPNs. (Other alternatives are left as an exercise for the reader.)

If you are trying to regionalize MPLS, you need at least some awareness of your carrier’s topology. If you’re trying to get traffic from Miami to Tampa, you may not want it detouring through, say, Atlanta to get there. The caveat here is that we may be seeing new WAN design approaches, but they may not play well with older designs or technologies. It’s on you, the WAN designer, to take all that into account!
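For a feel of what such a detour costs, the sketch below estimates a lower bound on propagation delay from great-circle distances. The city coordinates are approximate, the figure of roughly 200 km per millisecond for light in fiber is a common rule of thumb, and real fiber routes are longer than great circles, so treat the output as a floor rather than a prediction.

```python
import math

# Approximate city coordinates (latitude, longitude) in degrees.
CITIES = {
    "Miami": (25.76, -80.19),
    "Tampa": (27.95, -82.46),
    "Atlanta": (33.75, -84.39),
}

EARTH_RADIUS_KM = 6371.0
FIBER_KM_PER_MS = 200.0  # rule of thumb: light in fiber covers about 200 km per ms

def great_circle_km(a, b):
    """Haversine distance between two named cities."""
    lat1, lon1 = map(math.radians, CITIES[a])
    lat2, lon2 = map(math.radians, CITIES[b])
    dlat, dlon = lat2 - lat1, lon2 - lon1
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))

def one_way_ms(path):
    """Minimum one-way propagation delay along a list of cities."""
    km = sum(great_circle_km(a, b) for a, b in zip(path, path[1:]))
    return km / FIBER_KM_PER_MS

direct_ms = one_way_ms(["Miami", "Tampa"])
detour_ms = one_way_ms(["Miami", "Atlanta", "Tampa"])
print(f"direct: {direct_ms:.1f} ms, via Atlanta: {detour_ms:.1f} ms")
```

Propagation delay is only part of the story (queuing and per-hop processing add to it), but it is the part you cannot tune away.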

If you use Internet-based SD-WAN or IWAN, you control the tunneling. Regional hub and spoke is then a distinct possibility.

Right now, SD-WAN vendors have some incentive to favor the regionalized approach, and their slideware suggests it. See for instance the #NFD13 video sessions.

Here’s why: Regionalized CoLo-based SD-WAN allows you to supplement the SD-WAN network with firewall and security appliances in the regional CoLo. As SD-WAN vendors add NFV capability to run virtualized security and other services on the same platforms (appliance or virtual appliances), more decentralization may become preferred.

In the near term, performance will likely define a sharp line between where virtual appliances are suitable, and where physical devices are needed. As general purpose computers’ networking performance improves, the virtual versus physical router boundary seems likely to move upwards, meaning physical routers could become a shrinking market. Having woken the reader up by scaring them, let me hasten to add that traffic growth might offset that effect a good bit. In other words, my crystal ball is hazy on this topic.

MetroEthernet

One other WAN alternative is MetroEthernet. Generally, this is used as a single WAN interconnecting all sites, rather than using Ethernet Virtual Circuits (via VLAN tagging) for site-to-site circuit-like connections.

When using MetroEthernet, we recommend attaching all sites via Layer 3 routers or switches, since large Spanning Tree domains are a Really Bad Idea. If you attach via L3 switches (very tempting, cost saving), bear in mind you cannot do traffic shaping to sub-line-rate contractual speeds. If you do that and your MetroEthernet provider does policing, don’t expect good VoIP and video quality. The alternative is to spend more, and use routers to connect. That enables you to shape to near-end or far-end access speed.
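Why policing without shaping hurts can be seen in a toy model. The sketch below sends a line-rate burst at a token-bucket policer and at a shaper; the 1 Gbps access speed, 100 Mbps contract, bucket depth, and packet sizes are all assumptions, and real devices have more knobs (and may remark rather than drop).

```python
def police(arrivals, rate_mbps, burst_bytes):
    """Token-bucket policer: a packet that finds too few tokens is dropped.
    Times are in microseconds, so Mbps is the same as bits per microsecond."""
    tokens = burst_bytes
    last_t = 0
    sent = dropped = 0
    for t, size in arrivals:
        # Refill for the elapsed time, capped at the bucket depth.
        tokens = min(burst_bytes, tokens + (t - last_t) * rate_mbps / 8)
        last_t = t
        if size <= tokens:
            tokens -= size
            sent += 1
        else:
            dropped += 1
    return sent, dropped

def shape(arrivals, rate_mbps):
    """Shaper: packets wait in a queue (assumed unbounded here) and leave
    no faster than rate_mbps. Returns the worst queueing delay in microseconds."""
    next_free = 0
    worst_delay = 0
    for t, size in arrivals:
        start = max(t, next_free)
        worst_delay = max(worst_delay, start - t)
        next_free = start + size * 8 / rate_mbps
    return worst_delay

# 100 packets of 1500 bytes arriving back to back on a 1 Gbps port:
# one packet every 12 microseconds.
burst = [(i * 12, 1500) for i in range(100)]

sent, dropped = police(burst, rate_mbps=100, burst_bytes=15000)
print(f"policed: {sent} sent, {dropped} dropped")
print(f"shaped: 0 dropped, worst delay {shape(burst, rate_mbps=100) / 1000:.1f} ms")
```

The policer throws away most of the burst, while the shaper trades the loss for delay. That is the trade you give up when the attached device cannot shape.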

One caveat: Routers attached to a single VLAN form a mesh of routing adjacencies. This is not great (many routing neighbors), and you’ll want to watch the scaling. OSPF and IS-IS can be tweaked to be less chatty. Regionalizing such meshes might be a good idea, for scalability.
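To see how quickly the neighbor count grows, and what regionalizing buys, here is a rough count. It assumes a hypothetical layout in which sites are split evenly into regional VLANs, each with one hub router, and the hubs share a backbone VLAN. It counts router pairs that share a segment; how many of those become full adjacencies depends on the routing protocol and its settings.

```python
def mesh_pairs(n_routers):
    """Number of router pairs sharing one VLAN."""
    return n_routers * (n_routers - 1) // 2

def single_vlan(n_sites):
    """All sites on one VLAN: every router sees every other router."""
    return {"neighbors_per_router": n_sites - 1, "total_pairs": mesh_pairs(n_sites)}

def regionalized(n_sites, n_regions):
    """Sites split evenly across regional VLANs, one hub router per region,
    hubs meshed on a separate backbone VLAN (a hypothetical layout)."""
    per_region = n_sites // n_regions
    return {
        "neighbors_per_site": per_region,                   # other sites plus the hub
        "neighbors_per_hub": per_region + (n_regions - 1),  # sites plus other hubs
        "total_pairs": n_regions * mesh_pairs(per_region + 1) + mesh_pairs(n_regions),
    }

print(single_vlan(100))
print(regionalized(100, 4))
```

With these assumed numbers, splitting 100 sites into 4 regions cuts each site router's neighbor count from 99 to 25, at the price of extra hub routers.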

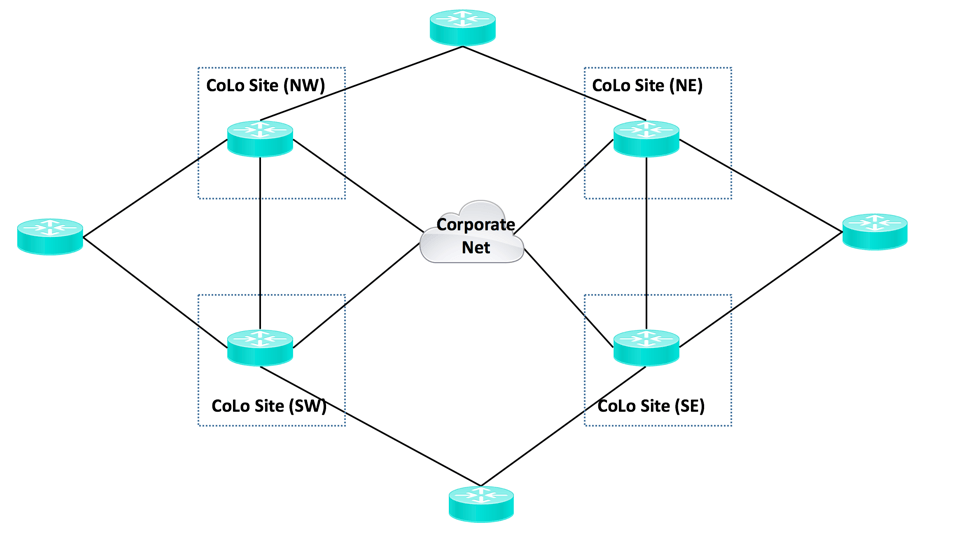

A NEWS Design

One regionalization approach is what I’ll call the NEWS approach (North, East, West, South). Here’s a version of that with decentralized internet; the diagram shows DMVPN or possibly SD-WAN access to a CoLo-based corporate backbone, corporate datacenters, and perhaps firewalled corporate cloud-based applications (not shown). That raises the question: why not secure corporate apps by shifting to a SaaS model with a focus on individual access controls rather than a secured perimeter? (YABT: Yet Another Blog Topic.)

One might do this sort of thing for geo-diversity. It also would make the corporate backbone independent of datacenters, useful if the datacenters/locations might be subject to change.

How to make this work with IWAN’s PfRv3 is not immediately clear, and is therefore left as a thought exercise for the reader.

Other Notes

Per “The Death of Transit?” by Geoff Huston, cloud datacenter-centric backbones are rapidly becoming the biggest, lowest latency connections out there. On the other hand, you may well face costs for cloud-to-cloud data export.

Equinix touts their neutrality as to external connectivity: They have no backbone per se, but connect well to high-speed MPLS and other WAN providers, or to cloud providers. A regional Equinix CoLo strategy thus gets you “close” to some big pipes. See also “Equinix Performance Hub.”

Office 365 does geo-location of cached content based on DNS recursion and how the DNS recursion traffic gets to the internet. I see that as mostly being a question of where you put your DNS recursion in relation to your internet egress points. External-facing DNS in CoLo facilities (around the world!) is one possibility. This is a possible topic to revisit in detail at another time (blog).

A somewhat different topic is providing good customer experience for a retail or banking application, etc. There’s a case to be made that you want your website hosting location(s) to be directly connected to the ISPs serving your customer base, “getting ‘close’ to your customer.”

Comments

Comments are welcome, whether in agreement or constructive disagreement with the above. I enjoy hearing from readers and carrying on deeper discussion via comments. Thanks in advance!